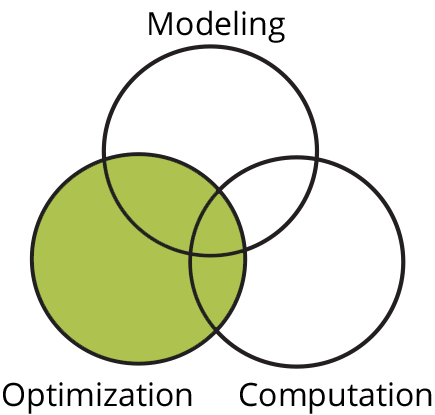

Whether a mathematical model exists or one is inferred from data, it is often critical to determine a set of problem parameters that optimizes properties of the solution. A goal of optimization research, and a second pillar of the MOCA center, is the design and analysis of algorithms that find local or global optimizers of problems arising from models or data.